Knowledge distillation into skills via feedback loops

Agents know syntax. They don’t know taste. You can’t fix that by writing better prompts. You fix it by distilling your knowledge into a skill - and the best way to distill is through a feedback loop.

I built charts-cli to prove this. Feed it an ECharts JSON config, get back SVG or PNG. 12 chart types, one pipe.

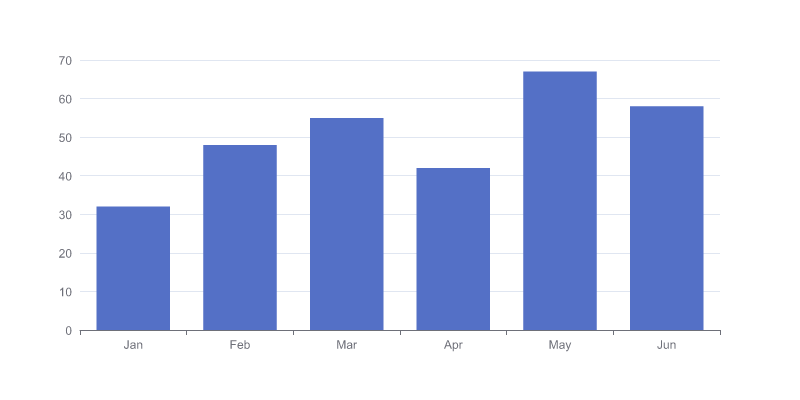

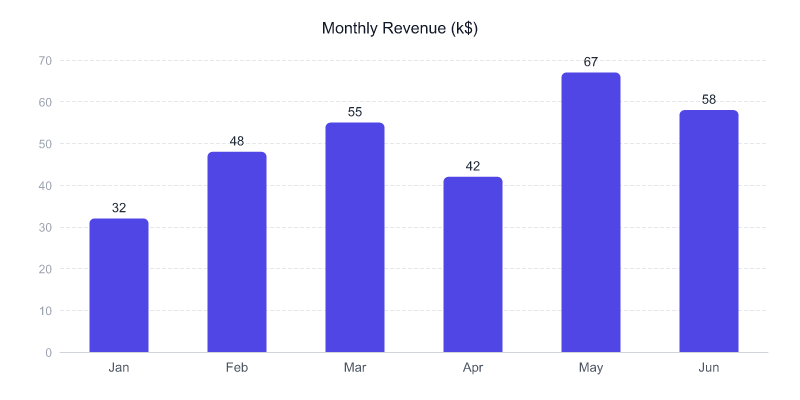

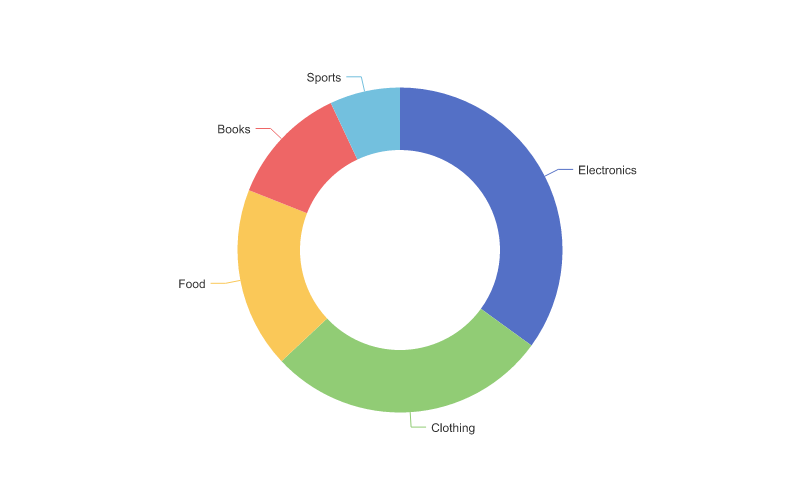

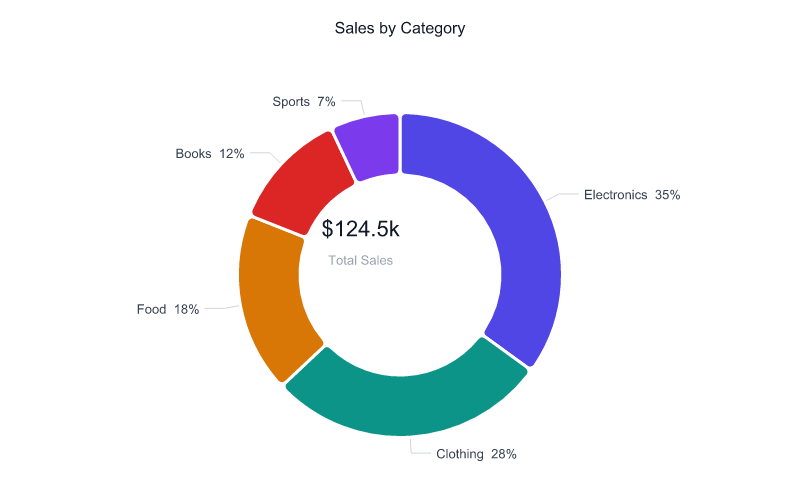

    echo '{"series":[{"type":"bar","data":[10,20,35]}]}' | charts render -o chart.png

The CLI worked immediately. The output looked terrible. Here’s what an agent produces without a skill vs. with one:

Left: default ECharts. Right: with a 213-line skill. Same data, same chart types.

The model knows ECharts syntax. It has no taste. The skill on the right is 213 lines of distilled aesthetic knowledge. I didn’t write it from memory. I extracted it through a feedback loop.

The distillation loop

The process:

- Write a rule (or guess one)

- Agent renders a chart using the skill

- Look at the output

- Fix the skill

- Repeat

That’s it. Each cycle distills one piece of tacit knowledge into an explicit rule.

First render: transparent background, invisible on dark viewers. Added backgroundColor: "#ffffff". Second render: default ECharts blue, looks dated. Picked a palette: #4f46e5, #0d9488, #d97706, #dc2626, #7c3aed, #0891b2. Third render: bars look flat. Added borderRadius: [5,5,0,0]. Fourth render: no value labels. Added label.show: true, position: "top".

Each render surfaced exactly one problem. Each fix distilled one more thing I knew but hadn’t articulated into the skill.
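The four fixes above can be collected into a single config. This is a minimal sketch, assuming each rule lands where standard ECharts options put it (top-level `backgroundColor` and `color`, per-series `itemStyle.borderRadius` and `label`). The file name `bar.json`, the axis labels, and the sample data are illustrative, and the render step only runs if `charts` is on the PATH.

```shell
#!/bin/sh
# Sketch: the four fixes from the loop merged into one ECharts config.
# bar.json and the x-axis labels are illustrative, not taken from the skill.
cat > bar.json <<'EOF'
{
  "backgroundColor": "#ffffff",
  "color": ["#4f46e5", "#0d9488", "#d97706", "#dc2626", "#7c3aed", "#0891b2"],
  "xAxis": { "type": "category", "data": ["A", "B", "C"] },
  "yAxis": { "type": "value" },
  "series": [
    {
      "type": "bar",
      "data": [10, 20, 35],
      "itemStyle": { "borderRadius": [5, 5, 0, 0] },
      "label": { "show": true, "position": "top" }
    }
  ]
}
EOF

# Render only when charts-cli is installed; the config goes in on stdin.
if command -v charts >/dev/null 2>&1; then
  charts render -o chart.png < bar.json
fi
```

Each key in the file corresponds to one pass through the loop, which makes it easy to see which render motivated which rule.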

Knowledge that only exists through use

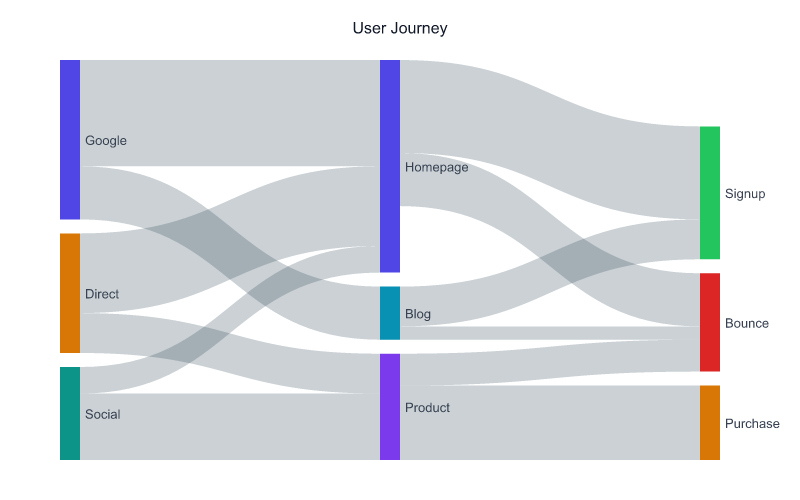

Some rules aren’t in any documentation. They can only be distilled by running the loop:

- Candlestick charts need

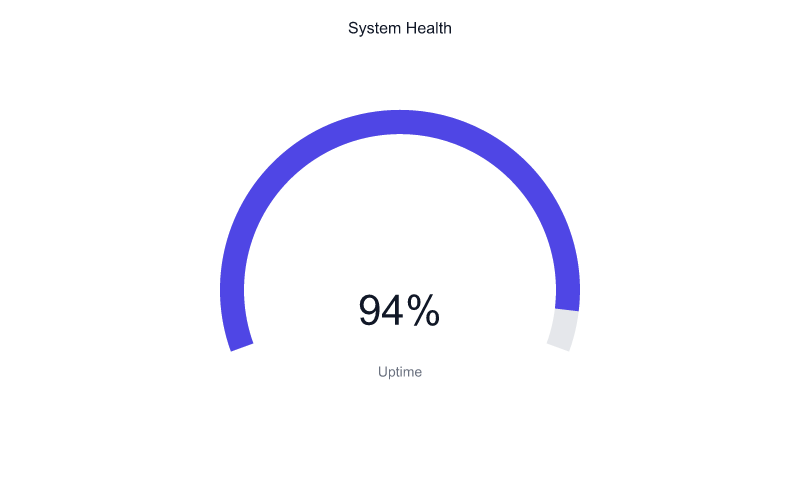

yAxis.scale: true. Without it, the axis starts at 0 and candles in the 140-155 range become invisible slivers. - Pie/donut charts need

-W 800 -H 500(taller than default) or labels get clipped. - Gauge charts look best as progress arcs, not classic needles.

progress.show: true,pointer.show: false, hide all ticks and labels, big center value. - Heatmaps need extra right margin (

grid.right: 120) or the visualMap legend overlaps the chart.
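Two of these gotchas are easier to see as configs. The sketch below is an assumption about how the rules map onto ECharts options: "hide all ticks and labels" is read as turning off `axisTick`, `splitLine`, and `axisLabel`, and "big center value" as a large `detail.fontSize`. The file names, sample prices, the gauge value of 72, and the font size of 40 are illustrative.

```shell
#!/bin/sh
# Sketch: two of the gotchas as minimal configs. File names and data are illustrative.

# Candlestick: yAxis.scale lets the axis fit the 140-155 range instead of starting at 0.
# Each data row is [open, close, low, high].
cat > candles.json <<'EOF'
{
  "xAxis": { "type": "category", "data": ["Mon", "Tue", "Wed"] },
  "yAxis": { "type": "value", "scale": true },
  "series": [
    {
      "type": "candlestick",
      "data": [[142, 148, 140, 150], [148, 145, 143, 152], [145, 153, 144, 155]]
    }
  ]
}
EOF

# Gauge as a progress arc: arc on, needle off, ticks and labels hidden, large center value.
cat > gauge.json <<'EOF'
{
  "series": [
    {
      "type": "gauge",
      "progress": { "show": true },
      "pointer": { "show": false },
      "axisTick": { "show": false },
      "splitLine": { "show": false },
      "axisLabel": { "show": false },
      "detail": { "fontSize": 40, "offsetCenter": [0, 0] },
      "data": [{ "value": 72 }]
    }
  ]
}
EOF

# Render only when charts-cli is installed.
if command -v charts >/dev/null 2>&1; then
  charts render -o candles.png < candles.json
  charts render -o gauge.png < gauge.json
fi
```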

No amount of reading ECharts docs would have surfaced these. This knowledge only exists through use. The feedback loop is how you capture it.

Distill further

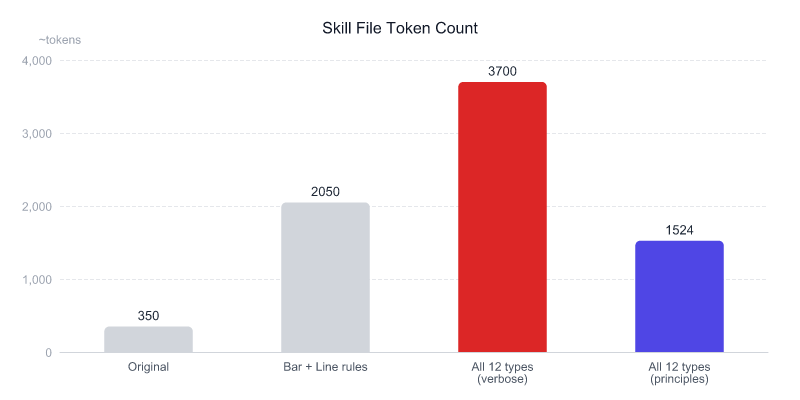

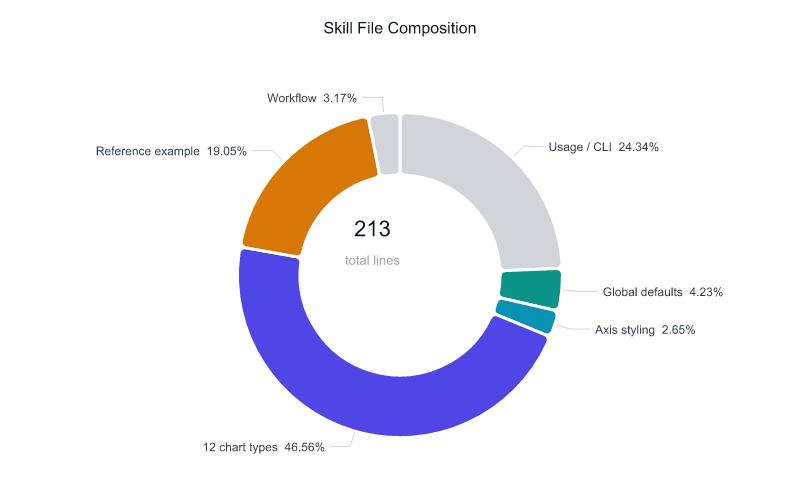

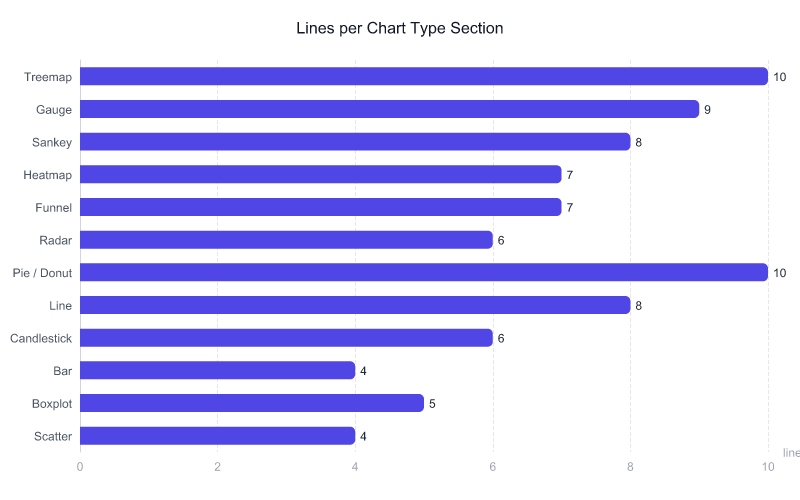

After covering all 12 chart types, the skill was 587 lines and ~3,700 tokens. I’d distilled the knowledge, but I hadn’t compressed the expression.

The model already knows ECharts. It can run charts schema bar to get the config structure. What it needs from the skill is opinions - the specific values, the non-obvious gotchas, the aesthetic choices. Everything else is noise eating the context window.

So I distilled again - from verbose JSON blocks to bullet-point principles. This is the entire bar section:

### Bar

- barWidth: "50%", rounded top corners borderRadius: [5,5,0,0]

- Value labels on top: label.show: true, position: "top", color #1f2937, fontSize 13, fontWeight bold

Two lines. The model composes the full JSON from this plus the schema.
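To make that composition step concrete, here is one plausible expansion of the two Bar bullets into a series entry. It is a sketch of what an agent might write, not output captured from a run; the data values and the file name `bar-expanded.json` are illustrative.

```shell
#!/bin/sh
# Sketch: one plausible expansion of the two Bar bullets into a series entry.
# The data values and bar-expanded.json are illustrative.
cat > bar-expanded.json <<'EOF'
{
  "series": [
    {
      "type": "bar",
      "data": [10, 20, 35],
      "barWidth": "50%",
      "itemStyle": { "borderRadius": [5, 5, 0, 0] },
      "label": {
        "show": true,
        "position": "top",
        "color": "#1f2937",
        "fontSize": 13,
        "fontWeight": "bold"
      }
    }
  ]
}
EOF

# Render only when charts-cli is installed.
if command -v charts >/dev/null 2>&1; then
  charts render -o bar-expanded.png < bar-expanded.json
fi
```

The bullets carry only the values; the nesting (`itemStyle`, `label`) is the part the model already knows or can look up from the schema.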

587 to 213 lines, about 64% fewer. ~3,700 to ~1,500 tokens, 59% smaller.
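The reduction is quick to check from the quoted figures. A small sketch, assuming `awk` is available; the helper name `pct_smaller` is made up for this example, and the token counts are the approximate ones given above.

```shell
#!/bin/sh
# Percentage reduction from the figures quoted above; token counts are approximate.
pct_smaller() {
  # $1 = size before, $2 = size after; prints the reduction rounded to a whole percent
  awk -v before="$1" -v after="$2" 'BEGIN { printf "%.0f\n", (before - after) / before * 100 }'
}

lines_pct=$(pct_smaller 587 213)
tokens_pct=$(pct_smaller 3700 1500)
echo "lines: ${lines_pct}% smaller, tokens: ${tokens_pct}% smaller"
```

The 59% figure corresponds to the token count; measured in lines, the cut is slightly larger.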

Validate

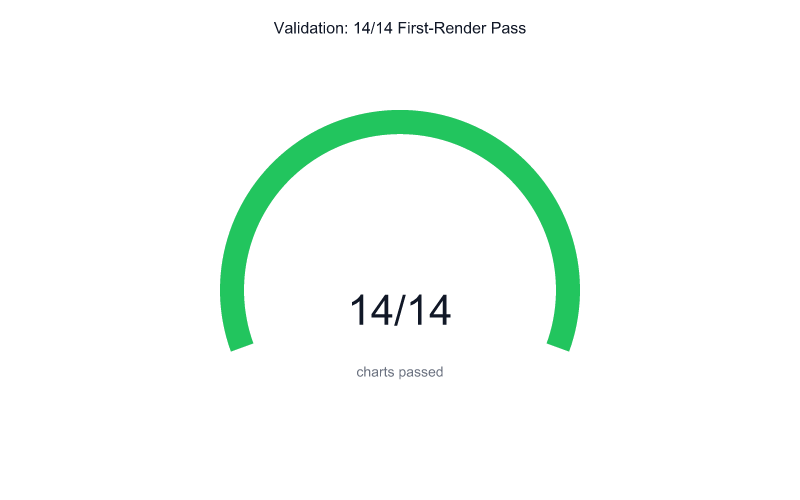

Compressing is scary. Did I cut too much? To find out, I used nanny - a task orchestrator that breaks a goal into sub-agents. I gave it one job: build every chart variant from the trimmed skill alone. 14 sub-agents, 14 chart variants, each reading only the compressed skill and charts schema.

Every single one produced a clean chart on the first render.

The distilled principles were enough. The verbose examples were never needed.
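A mechanical check can back up the visual one. The sketch below assumes the sub-agents leave their configs in a directory; `configs/` and `out/` are hypothetical names, and nanny's own interface is not shown. It only confirms that each config renders to a non-empty file, so whether the chart looks good still needs a human eye.

```shell
#!/bin/sh
# Sketch: render every config in a directory and flag any that produce no output.
# configs/ and out/ are hypothetical; nanny's own interface is not shown here.
mkdir -p configs out

# One sample config so the loop has something to process.
[ -e configs/bar.json ] || echo '{"series":[{"type":"bar","data":[10,20,35]}]}' > configs/bar.json

total=0
failed=0
for cfg in configs/*.json; do
  total=$((total + 1))
  name=$(basename "$cfg" .json)
  if command -v charts >/dev/null 2>&1; then
    charts render -o "out/$name.png" < "$cfg"
    # An empty or missing file counts as a failed render.
    [ -s "out/$name.png" ] || failed=$((failed + 1))
  else
    echo "charts not installed, skipping $name"
  fi
done
echo "checked $total configs, $failed failed"
```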

The output

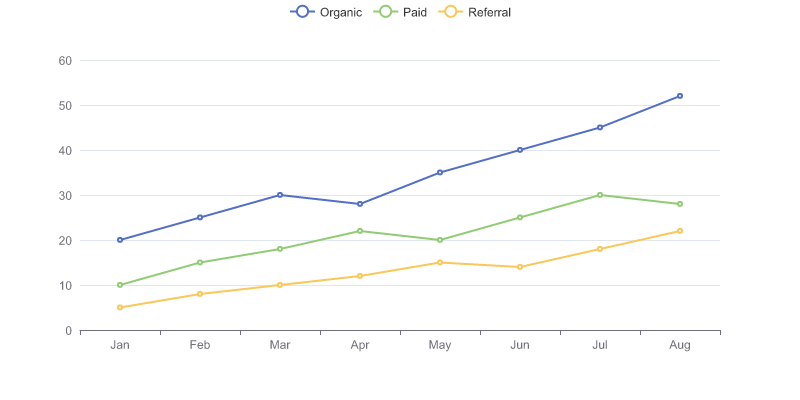

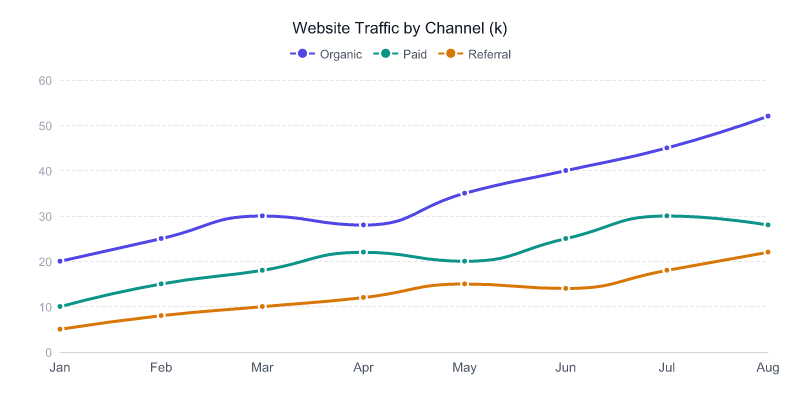

The full range - all generated by agents reading the same 213-line skill:

Get it

    npm install -g charts-cli

To install the skill with the design principles from this post:

    npx skills add Michaelliv/charts-cli

The source is on GitHub. MIT licensed. Works with any agent that can run CLI commands - Claude Code, Pi, Codex, whatever.

The takeaway isn’t about charts. It’s about distillation. You have knowledge the model doesn’t - taste, opinions, hard-won gotchas. A feedback loop extracts it, one render at a time. Compression purifies it. Validation proves it. The result is a skill that’s small, dense, and better than anything you could have written from scratch.